#CloudGuruChallenge – Event-Driven Python on AWS

Overview

My main motivation for taking this challenge was that at work they told me we are going to start using Python for our next projects, and I also wanted to do more projects with AWS technologies. So I started looking for resources to learn both. I don't remember how I got to CloudGuru, but I got there, and I saw that they publish a new AWS challenge every month. I read the challenge, Event-Driven Python, and the awesome thing was that it involved Python, which felt great because that was exactly what I wanted to learn.

About the challenge

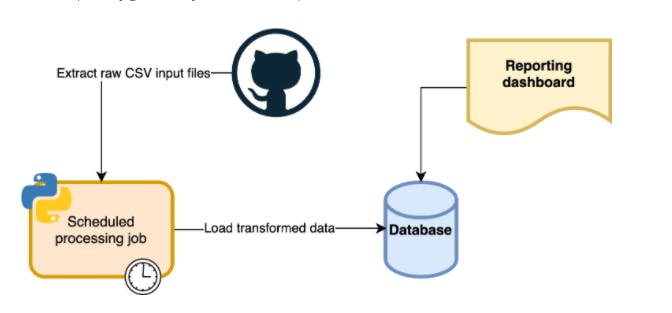

The project aims to build an ETL process that gets COVID data from two CSV files, one from The New York Times and one from Johns Hopkins, using Python and AWS cloud services. The architecture of the project is shown in the accompanying diagram.

How did I do it?

My first step was to take a look at the data I was going to use. Since I didn't know anything about Python, I started looking for resources on how to read a CSV with it. After 3 days of learning the big picture of Python, I felt confident enough to start coding. The library I used to manipulate the CSV files was pandas, so joining the 2 datasets was easy, and the documentation on the internet was super helpful. Once my final data was ready to be loaded into a database, I created a serverless application using the Serverless Framework and split the code into 4 Python modules:

ETL (to handle the extraction, transformation and load of the data)

DB (to handle the connection to the database and the insertion of the data)

Utils (to handle any kind of function that I can use across my project)

Notify (to send a notification whenever the data gets updated or something fails in the code)
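To make the join step more concrete, here is a minimal sketch of how two CSV sources could be read and merged with pandas. It is not the code from the project: the column names (`date`, `cases`, `deaths`, `Date`, `Country/Region`, `Recovered`), the tiny inline samples, and the `load_and_merge` helper are all assumptions made for illustration.

```python
import io

import pandas as pd

# Tiny inline samples standing in for the two CSV files.
# The column names are assumptions, not taken from the project.
NYT_CSV = """date,cases,deaths
2020-01-22,1,0
2020-01-23,1,0
2020-01-24,2,0
"""

JH_CSV = """Date,Country/Region,Recovered
2020-01-22,US,0
2020-01-22,Italy,0
2020-01-23,US,0
"""


def load_and_merge(nyt_source, jh_source):
    """Read both CSV sources and join them on the date column (hypothetical helper)."""
    nyt = pd.read_csv(nyt_source, parse_dates=["date"])
    jh = pd.read_csv(jh_source, parse_dates=["Date"])

    # Keep only the US rows and align the column names with the first dataset.
    jh_us = jh[jh["Country/Region"] == "US"]
    jh_us = jh_us.rename(columns={"Date": "date", "Recovered": "recovered"})

    # An inner join keeps only the dates that appear in both datasets.
    return nyt.merge(jh_us[["date", "recovered"]], on="date", how="inner")


merged = load_and_merge(io.StringIO(NYT_CSV), io.StringIO(JH_CSV))
print(merged)
```

`pd.read_csv` also accepts a file path or URL, so the same helper could be pointed at the real files instead of the inline samples.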

The big challenge

A big challenge for me was spinning up the database with Terraform, but I found that using Terraform was more fun than using the GUI in Amazon. I would highly recommend learning Terraform from the documentation on the official page: Terraform. If you are curious about how to find the names of the resources when using Terraform, that page provides that information too.

Final Result

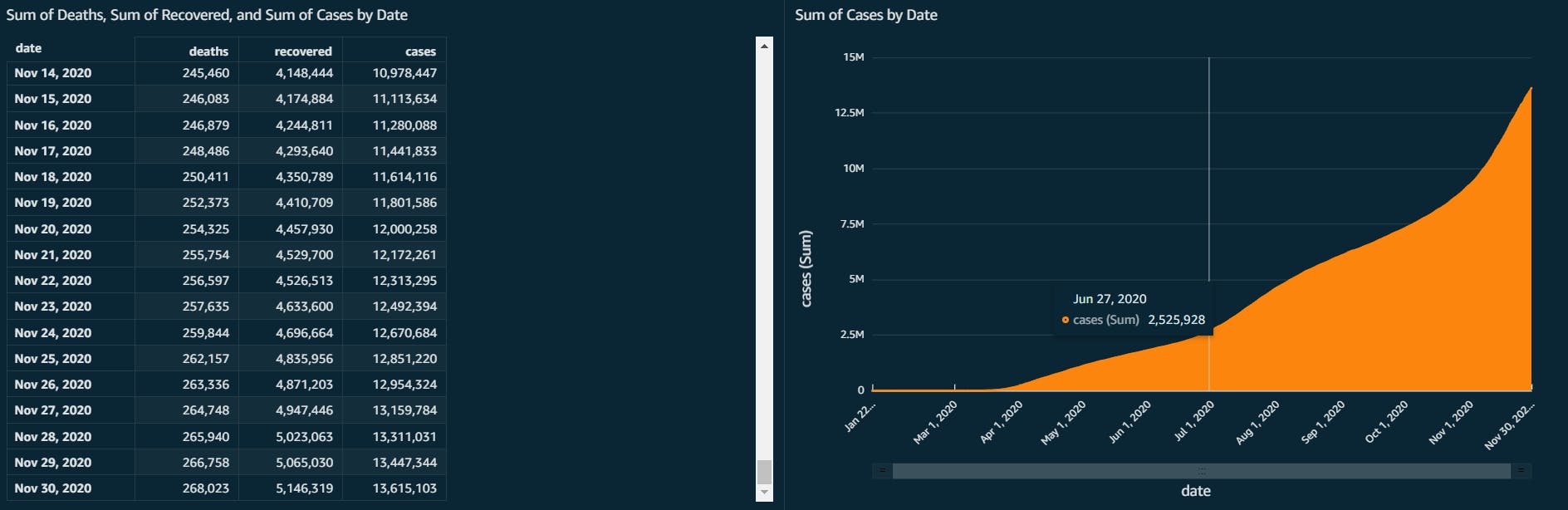

To show the data that we inserted into the database, we had to use a visualization tool. I found that this was super easy to do with Amazon QuickSight.

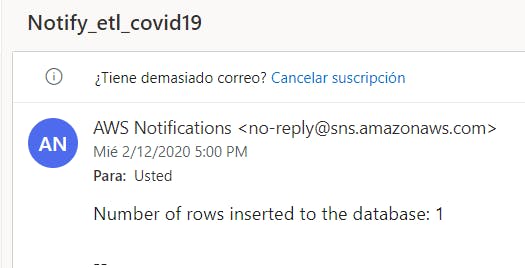

And the best thing is the notification that SNS sends me every time my DB gets updated.
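For readers curious what such a notification step might look like, here is a minimal sketch rather than the project's actual Notify module. The `build_message` and `notify` helpers, the message wording, and the example topic ARN are hypothetical. The only real API assumed is the `publish(TopicArn=..., Subject=..., Message=...)` call of the boto3 SNS client. A small stand-in client is included so the example runs without AWS credentials.

```python
def build_message(rows_added, error=None):
    """Return a (subject, message) pair describing the ETL outcome."""
    if error is not None:
        return "ETL failed", f"The COVID ETL job failed: {error}"
    return "ETL succeeded", f"The COVID ETL job finished: {rows_added} row(s) added."


def notify(sns_client, topic_arn, rows_added=0, error=None):
    """Publish the ETL outcome to an SNS topic using the given client."""
    subject, message = build_message(rows_added, error)
    return sns_client.publish(TopicArn=topic_arn, Subject=subject, Message=message)


class FakeSnsClient:
    """Stand-in for the boto3 SNS client that records calls instead of sending them."""

    def __init__(self):
        self.published = []

    def publish(self, **kwargs):
        self.published.append(kwargs)
        return {"MessageId": "fake-id"}


# Hypothetical topic ARN, used only for this demonstration.
TOPIC_ARN = "arn:aws:sns:us-east-1:123456789012:etl-updates"

fake = FakeSnsClient()
notify(fake, TOPIC_ARN, rows_added=3)
notify(fake, TOPIC_ARN, error="database connection refused")
for call in fake.published:
    print(call["Subject"], "|", call["Message"])
```

Inside a Lambda function, the stand-in would be replaced with a real client created by `boto3.client("sns")`. Passing the client in as a parameter keeps the helper easy to test locally.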

Things I learnt

I'm so grateful for this challenge. I have learnt about so many things, which I list next:

Python

Lambda

SNS

CloudWatch

Terraform

What's next?

As I'm still taking my first steps in learning all of the technologies I mentioned previously, I will continue my journey and keep doing awesome challenges like this one to expand my knowledge.

Next, I will study more about CI/CD. That's one of the missing pieces that I didn't use in the challenge, but I will definitely put it into action.

GitHub: EtlCovid19

LinkedIn: Jose Hidalgo